Why RAG Matters

Large Language Models are powerful, but they hallucinate, go stale, and lack access to private data. Retrieval-Augmented Generation (RAG) solves this by grounding LLM responses in retrieved evidence — pulling relevant documents from a knowledge base before generating an answer.

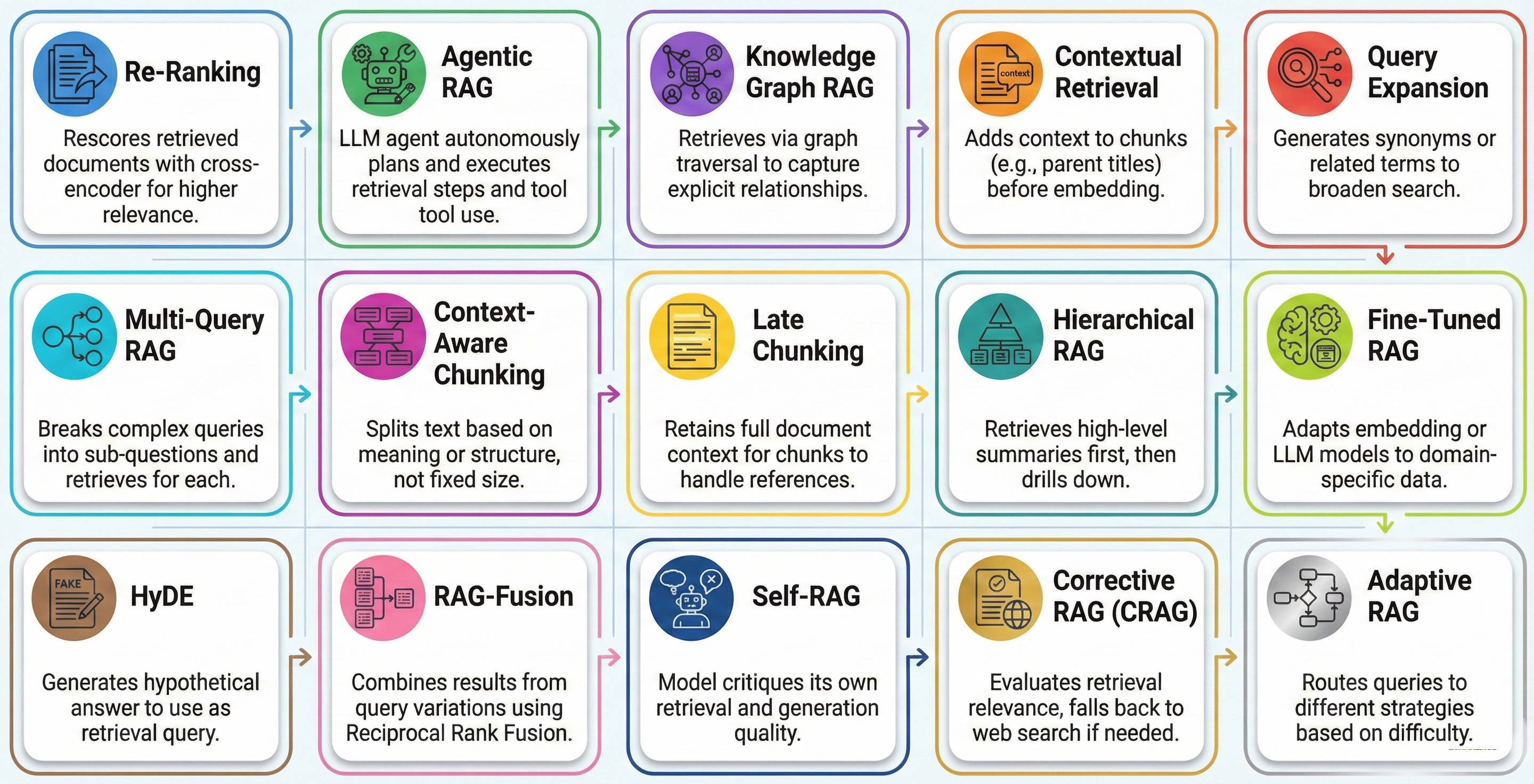

But not all RAG is created equal. Naive “retrieve-and-generate” pipelines break down when queries are ambiguous, documents are long, or the knowledge base is noisy. This repository explores 15 distinct RAG strategies, each addressing a specific weakness in the basic pattern.

Every strategy includes a Python implementation under src/ and an architecture diagram under architecture/.

Quick Start

git clone https://github.com/vchirrav/ml-rag-strategies.git cd ml-rag-strategies pip install -r requirements.txt export OPENAI_API_KEY="your-api-key-here" # Never hardcode API keys

See requirements.txt for the full dependency list. All samples read credentials from environment variables — never hardcode secrets.

The 15 Strategies

Re-Ranking

Use case: Customer Support

Combines fast vector search with a cross-encoder scoring pass to surface the most relevant results from an initial candidate set. The retriever casts a wide net, then the re-ranker applies fine-grained relevance scoring to reorder results before they reach the LLM.

Agentic RAG

Use case: Research Assistant

An LLM agent autonomously decides when and what to retrieve. Rather than always retrieving on every query, the agent reasons about whether retrieval is needed, formulates targeted queries, and can perform multi-step lookups with adaptive planning.

Knowledge Graph RAG

Use case: Medical Q&A

Structures documents as entity-relationship graphs and retrieves via graph traversal rather than vector similarity alone. Entities and their relationships are extracted during indexing, enabling multi-hop reasoning across connected concepts.

Contextual Retrieval

Use case: Legal Document Search

Prepends LLM-generated context summaries to each chunk before embedding. This preserves document-level meaning that would otherwise be lost during chunking, dramatically improving retrieval accuracy for context-dependent content.

Query Expansion

Use case: E-Commerce Search

Rewrites the user query into multiple phrasings and retrieves for each variant, then deduplicates results. This bridges the vocabulary gap between how users ask questions and how information is stored in the knowledge base.

Multi-Query RAG

Use case: Comparative Analysis

Decomposes complex questions into independent sub-questions, retrieves context for each sub-question separately, and synthesizes a unified answer. Ideal for questions that span multiple topics or require comparison.

Context-Aware Chunking

Use case: Technical Manuals

Splits documents along semantic boundaries (headings, paragraphs, sections) rather than fixed token counts. Preserves the logical structure of documents, ensuring each chunk contains a complete, coherent unit of information.

Late Chunking

Use case: Academic Papers

Processes full documents through the embedding model first, then splits the resulting token embeddings into chunks. Each chunk retains awareness of the full document context since embeddings were computed holistically before splitting.

Hierarchical RAG

Use case: Large Codebase Navigation

Uses two-level indexing: first retrieve high-level summaries to identify relevant documents, then drill into detailed chunks within those documents. Enables efficient coarse-to-fine retrieval over very large corpora.

Fine-Tuned RAG

Use case: Biomedical Literature

Fine-tunes the embedding model or the generation model on domain-specific question-answer pairs. Adapts the retrieval and generation components to the vocabulary, concepts, and reasoning patterns of a specific domain.

HyDE (Hypothetical Document Embeddings)

Use case: Vague Query Resolution

Generates a hypothetical answer to the query, then uses that answer as the retrieval query instead of the original question. By embedding a plausible answer rather than a short question, retrieval quality improves significantly for underspecified queries.

RAG-Fusion

Use case: Multi-Rephrase Combination

Generates multiple query variants, retrieves independently for each, and combines results using Reciprocal Rank Fusion (RRF). This reduces the impact of any single query formulation and produces more robust retrieval results.

Self-RAG

Use case: Adaptive Chatbot

The model self-reflects at each generation step, deciding whether retrieval is needed, evaluating the relevance of retrieved documents, and verifying that its response is properly grounded. Introduces learned reflection tokens for autonomous quality control.

Corrective RAG (CRAG)

Use case: Internal Wiki with Web Fallback

Grades retrieved documents for relevance and falls back to web search if the internal knowledge base returns insufficient results. Includes a knowledge refinement step that strips irrelevant content from retrieved passages before generation.

Adaptive RAG

Use case: Help Desk Query Routing

Classifies incoming query complexity and routes to the appropriate retrieval strategy: simple queries use direct retrieval, moderate queries use query expansion, and complex queries are decomposed into sub-questions. Optimizes cost and latency by matching strategy to need.

When to Use Which Strategy

Improving Retrieval Quality

Re-Ranking (post-retrieval scoring), Contextual Retrieval (chunk-level context), Late Chunking (holistic embeddings), Fine-Tuned RAG (domain adaptation)

Handling Complex Queries

Query Expansion (vocabulary gap), Multi-Query RAG (decomposition), HyDE (vague queries), RAG-Fusion (multi-rephrase RRF)

Scaling to Large Corpora

Hierarchical RAG (two-level indexing), Context-Aware Chunking (semantic splitting), Knowledge Graph RAG (entity-relationship traversal)

Self-Correcting & Adaptive Systems

Self-RAG (reflection tokens), Corrective RAG (web fallback), Adaptive RAG (complexity-based routing), Agentic RAG (autonomous retrieval decisions)

Security Considerations for RAG Systems

RAG pipelines introduce unique attack surfaces that traditional applications don't have. Every strategy in this repository follows defensive practices:

- No hardcoded secrets — All API keys are read from environment variables, never committed to source control

- Context grounding — Responses are grounded in retrieved content to prevent hallucination and reduce prompt injection risk

- No arbitrary code execution — None of the strategies execute dynamically generated code, eliminating code injection vectors

- Minimal dependencies — Only well-maintained, widely-used libraries are included to reduce supply chain risk

- Input validation — Review retrieved content before passing to the LLM to prevent indirect prompt injection attacks

Repository Structure

ml-rag-strategies/

├── README.md

├── RAG_LIBRARIES.md # Companion guide to RAG libraries

├── requirements.txt # Shared Python dependencies

├── architecture/ # SVG architecture diagrams

│ ├── 01_reranking.svg

│ ├── 02_agentic_rag.svg

│ ├── ...

│ └── 15_adaptive_rag.svg

└── src/ # Python implementations

├── reranking/ # Strategy 01

├── agentic_rag/ # Strategy 02

├── knowledge_graph_rag/ # Strategy 03

├── contextual_retrieval/ # Strategy 04

├── query_expansion/ # Strategy 05

├── multi_query_rag/ # Strategy 06

├── context_aware_chunking/# Strategy 07

├── late_chunking/ # Strategy 08

├── hierarchical_rag/ # Strategy 09

├── fine_tuned_rag/ # Strategy 10

├── hyde_rag/ # Strategy 11

├── fusion_rag/ # Strategy 12

├── self_rag/ # Strategy 13

├── corrective_rag/ # Strategy 14

└── adaptive_rag/ # Strategy 15Also see RAG_LIBRARIES.md for a companion guide to the libraries and frameworks used across strategies.

Explore the Code

All 15 strategies are open-source with runnable implementations and architecture diagrams. Clone the repository, install dependencies, and start experimenting with RAG patterns.