> ls ~/open-source --contributions

Open-Source Contributions

Building security frameworks, tools, and research in the open. Contributing to the community that defends AI/ML systems and application security at scale.

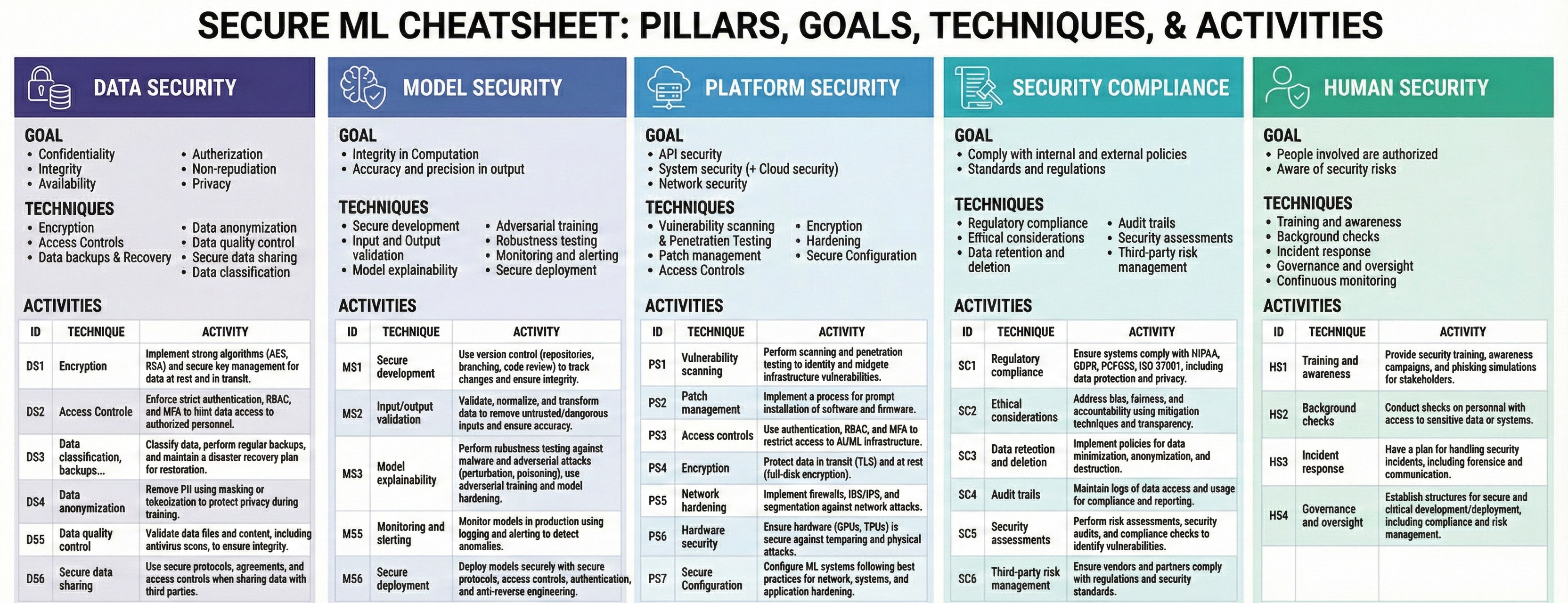

Secure-ML Framework

thalesgroup/secure-ml

A comprehensive framework for securing machine learning systems across the entire ML lifecycle. Developed as an industry-leading resource, it provides security policies, threat models, privacy-preserving techniques, and a curated collection of 40+ open-source security tools for ML.

- >ML Security Policy framework covering datasets, models, platforms, and compliance

- >Privacy-preserving techniques: Differential Privacy, Federated Learning, Homomorphic Encryption, SMPC

- >40+ curated open-source tools for adversarial security, LLM security, bias/fairness, and monitoring

- >ML threat taxonomy covering training data, model, and inference attack surfaces

- >Agentic AI threat comparison (CSA vs OWASP frameworks)

- >Presented at OWASP LASCON 2024 conference

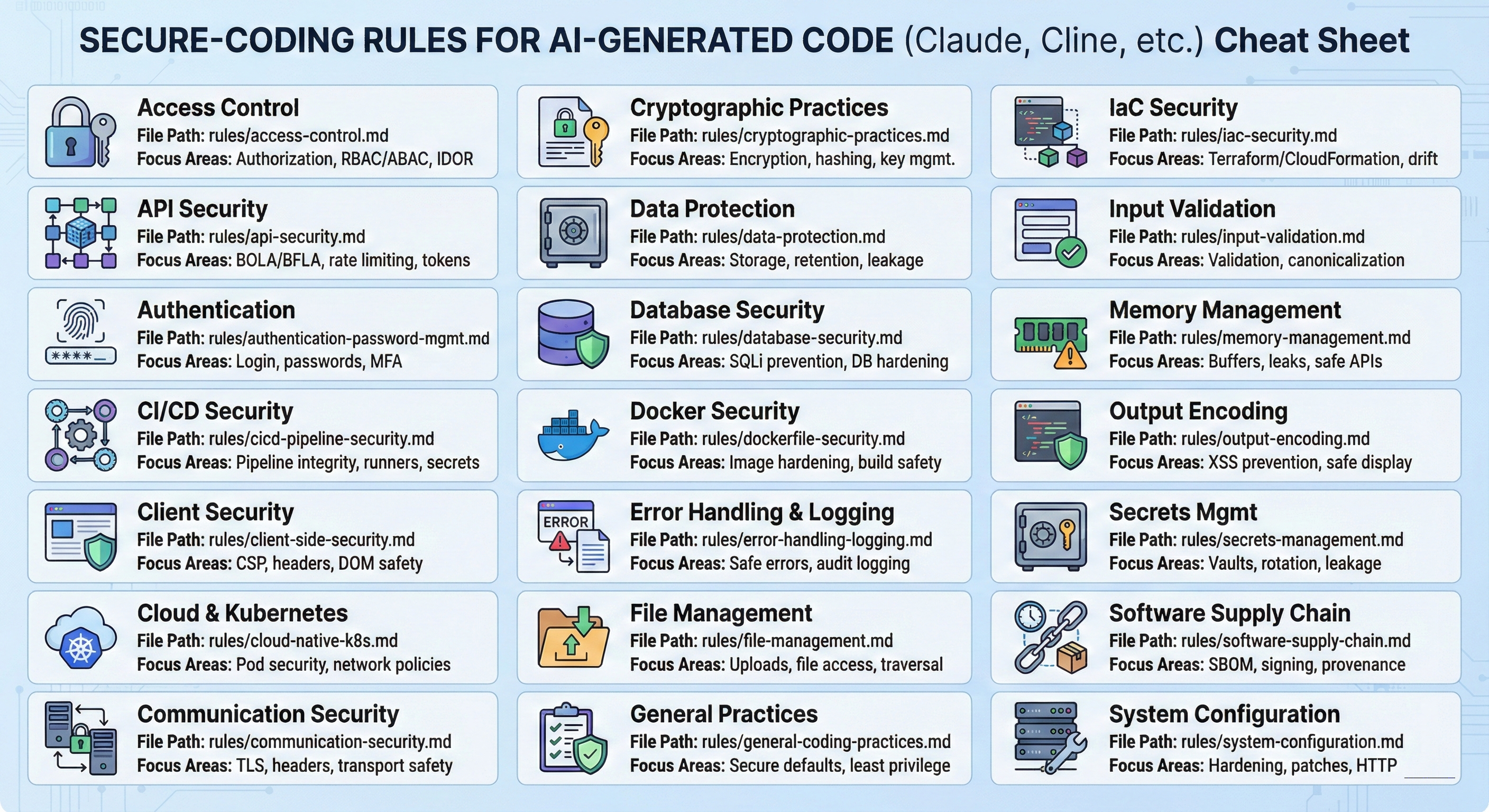

Secure Coding Practices (Markdown)

vchirrav-eng/owasp-secure-coding-md

A machine-readable, Markdown-optimized implementation of the OWASP Secure Coding Practices Quick Reference Guide (v2.1), extended with modern security domains including API Security, Cloud/Kubernetes, CI/CD, Supply Chain, IaC, and Secrets Management. Designed specifically for AI agents (Claude Code, GitHub Copilot) and LLMs to enable token-efficient, context-aware security audits and code generation.

- >22 modular rule files covering Access Control, API Security, Authentication, CI/CD, Cloud/K8s, Docker, IaC, Secrets Management, Supply Chain, and more

- >3 integration options: CLAUDE.md persona reference, Claude Code Skills (audit & generate), or MCP server (Docker & Docker Compose ready)

- >Two dedicated Claude Code skills: /secure-coding-audit for code review with findings tables, and /secure-coding-generate for producing secure code with inline Rule ID citations

- >MCP server exposing 22 resources and 3 tools (list_rules, get_rule, audit_checklist) with Docker, Docker Compose, and native Node.js deployment

- >Each rule follows a consistent 6-field pattern: Identity, Rule, Rationale, Implementation, Verification, Examples

- >Automatic domain detection in Skills mode — identifies relevant security domains from the code being audited or generated

- >Optimized for Just-In-Time context injection into LLM workflows without exhausting token budgets

Agentic AI Design Patterns

vchirrav-eng/agentic_ai_design_patterns

A practical reference covering Single-Agent Systems (SAS) and Multi-Agent Systems (MAS) design patterns for building secure, production-grade agentic AI applications. Documents architectural stages, inter-agent communication protocols (MCP, A2A, ACP), and security considerations at each layer. Companion repository to the blog post on agentic AI evolution.

- >Single-Agent System (SAS) patterns: tool use, memory management, context injection, and guardrails

- >Multi-Agent System (MAS) patterns: orchestrator/worker, peer-to-peer, and hierarchical agent topologies

- >Inter-agent protocol coverage: MCP, A2A, ACP, and AGNTCY

- >Security considerations at each architectural stage: scope limits, approval gates, kill switches

- >Companion to the blog post: The Evolution of Agentic AI Development

ML RAG Strategies

vchirrav-eng/ml-rag-strategies

A comprehensive reference covering 15 Retrieval-Augmented Generation (RAG) strategies with Python implementations, architecture diagrams (SVG), and security best practices. Covers everything from basic RAG to advanced techniques like Agentic RAG, Knowledge Graph RAG, Self-RAG, and Adaptive RAG. Companion repository to the blog post on RAG strategies.

- >15 RAG strategies: Re-Ranking, Agentic RAG, Knowledge Graph RAG, Contextual Retrieval, Query Expansion, Multi-Query, Context-Aware Chunking, Late Chunking, Hierarchical RAG, Fine-Tuned RAG, HyDE, RAG-Fusion, Self-RAG, CRAG, Adaptive RAG

- >Python implementations for each strategy with runnable examples

- >Architecture diagrams (SVG) visualizing retrieval flows and component interactions

- >Security best practices: vector database hardening, document poisoning prevention, retrieval boundary controls

- >Companion to the blog post: 15 RAG Strategies Every AI Engineer Should Know

Claude Code Token Consumption Dashboard

vchirrav-eng/claude-code-token-dashboard

A local-only, real-time token usage dashboard for Claude Code sessions. Provides live per-exchange token breakdowns (input, cache-read, cache-written, output) across all sessions, with a session picker and zero external dependencies. Runs as a lightweight Node.js server reading Claude Code JSONL session files directly.

- >Live per-exchange token breakdown: input, cache-read, cache-written, and output tokens

- >Session picker — browse and compare all Claude Code sessions from ~/.claude/projects/

- >Zero npm dependencies — pure Node.js server, no build step required

- >Auto-start via Claude Code SessionStart hook for seamless integration

- >Cumulative session totals with per-exchange drill-down for cost analysis

ML Research: Local LLM Fine-Tuning

vchirrav-eng/ml_research

Hands-on research into local LLM fine-tuning using HuggingFace Transformers, PEFT/LoRA adapters, and Unsloth for efficient training. Demonstrates the full pipeline from fine-tuning to GGUF conversion and local deployment via Ollama, optimized for NVIDIA Blackwell GPUs.

- >Fine-tuning TinyLlama-1.1B with 4-bit QLoRA on cybersecurity domain data

- >LoRA adapter training with rank-16, targeting all attention and MLP projection layers

- >Full pipeline: fine-tune → merge adapters → convert to GGUF (Q8_0) → deploy with Ollama

- >Optimized for NVIDIA RTX 5060 (Blackwell sm_120) with bfloat16 precision

- >Training completed in ~24 seconds with 64.57% mean token accuracy

- >Reproducible setup using uv package manager on Windows